Research

Modeling language structure, acquisition and use

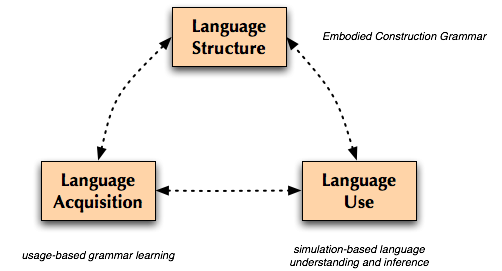

How can people and machines learn and use language? The goal of my research is to address this question by building integrated models of language structure, acquisition and use that are both cognitively and computationally motivated. To do this, I draw on ideas and techniques from computational and cognitive linguistics, artificial intelligence, cognitive modeling, and developmental psychology. More specific areas of focus include (embodied) construction grammar, natural language parsing and analysis, lexical semantics, information-theoretic and Bayesian models of learning, knowledge representation, and neural and connectionist models of computation.

My work is part of the Neural Theory of Language project, whose modest goals include understanding how language and cognition work, and how both of these are grounded in the brain.

My doctoral dissertation (available here) describes a computational model of the transition from single words to early multiword constructions that instantiates each of the components above. (Adviser: Jerome Feldman)