This page describes my preliminary work on recovering speaker identity from spatial cues derived from the multi-microphone recordings made in the meeting recorder project. Specifically, I am looking at the cross-correlation between the two microphones mounted on the dummy PDA. For sound coming from a single source, this cross-correlation should have a maximum at a time skew corresponding to the difference in arrival time of that source's signal at the two microphones. This skew in turn maps to the azimuth angle of the speaker relative to the axis passing through both microphones.
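The mapping from time skew to angle can be sketched as follows. This is a minimal illustration, not the code used for this page: it assumes a far-field source (plane wavefront), a speed of sound of 343 m/s, and the microphone spacing (about 10 cm) and sampling rate (16 kHz) of these recordings. The function name `lag_to_azimuth_deg` and its parameters are hypothetical.

```python
import math

def lag_to_azimuth_deg(lag_samples, mic_spacing_m=0.10,
                       sample_rate_hz=16000.0, speed_of_sound_m_s=343.0):
    """Angle (degrees) between the source direction and the microphone axis.

    Far-field assumption: delay = (d / c) * cos(theta), so
    theta = acos(c * delay / d). 0 degrees is the end of the axis that
    produces positive lags, 90 degrees is broadside (zero lag).
    """
    delay_s = lag_samples / sample_rate_hz
    cos_theta = speed_of_sound_m_s * delay_s / mic_spacing_m
    # Integer lags can slightly exceed the physical maximum d / c, so clamp.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))
```

With only about ten distinct integer lags available, the angular resolution of such a mapping is coarse, and a single microphone pair cannot tell apart directions that are mirror images about the axis.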

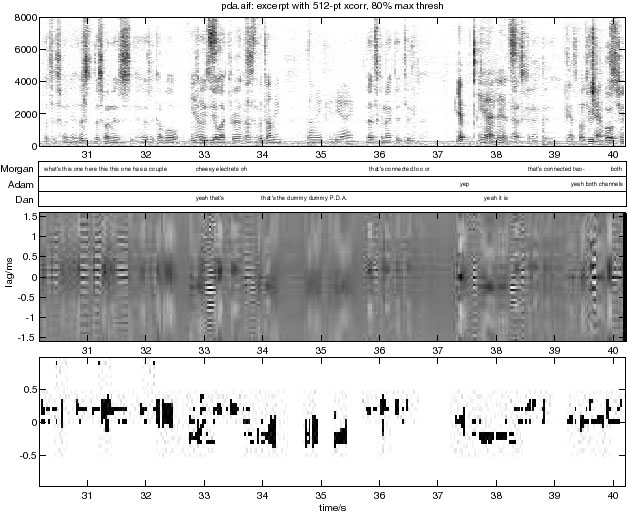

I extracted one minute of audio from close to the beginning of the first meeting we recorded, and built a stereo sound file from the left and right dummy PDA microphone channels. I then read this into Matlab and calculated the short-time cross-correlation on 50%-overlapped 512-point windows extracted from each channel. The microphones are about 10 cm apart, so the largest possible time skew between channels is about 0.3 ms (the time sound takes to travel 10 cm), or ± 5 samples at the 16 kHz sampling rate. I therefore plotted just the middle 50 points of the cross-correlation function, which comfortably covers that range.

In the figure, the top panel is the spectrogram of the sum of both channels along with an approximately aligned transcription. The middle panel is the central region of the cross-correlation function (normalized by short-time energy). The bottom panel applies a threshold, showing black pixels where the cross-correlation was above 80% of its peak value at that instant, for frames above an energy threshold set to exclude silent regions.
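The processing described above can be sketched roughly as follows. This is a plain-Python illustration rather than the original Matlab code, and several details are assumptions: the lag sign convention (positive lag means the left channel leads), normalization by the geometric mean of the two frame energies, a symmetric range of ±25 lags (51 points rather than exactly 50), a speed of sound of 343 m/s, and the `min_energy` value, since the source does not give the actual energy threshold. All function names are hypothetical.

```python
import math

def max_physical_lag(mic_spacing_m=0.10, sample_rate_hz=16000.0,
                     speed_of_sound_m_s=343.0):
    """Largest possible inter-channel skew in samples (about 4.7 here)."""
    return mic_spacing_m / speed_of_sound_m_s * sample_rate_hz

def short_time_xcorr(left, right, win=512, hop=256, max_lag=25):
    """Return one (energy, row) pair per frame.

    row[k] is the normalized cross-correlation at lag k - max_lag, with
    r(lag) = sum_n left[n] * right[n + lag] over the frame.
    """
    frames = []
    n_samples = min(len(left), len(right))
    for start in range(0, n_samples - win + 1, hop):  # hop = win / 2: 50% overlap
        a = left[start:start + win]
        b = right[start:start + win]
        e_a = sum(v * v for v in a)
        e_b = sum(v * v for v in b)
        norm = math.sqrt(e_a * e_b) or 1.0  # avoid dividing by zero on silence
        row = []
        for lag in range(-max_lag, max_lag + 1):
            lo = max(0, -lag)
            hi = min(win, win - lag)
            acc = sum(a[n] * b[n + lag] for n in range(lo, hi))
            row.append(acc / norm)
        frames.append((e_a + e_b, row))
    return frames

def threshold_mask(frames, rel_peak=0.8, min_energy=1e-3):
    """Mark lags within rel_peak of each frame's peak; blank out quiet frames."""
    mask = []
    for energy, row in frames:
        peak = max(row)
        if energy < min_energy or peak <= 0.0:
            mask.append([False] * len(row))
        else:
            mask.append([r >= rel_peak * peak for r in row])
    return mask
```

Each row of `short_time_xcorr` corresponds to one column of the middle panel, and `threshold_mask` to the bottom panel. For a full minute of audio the inner loops would be slow in pure Python; a vectorized or FFT-based correlation would be the practical choice.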

(You can click on the image to hear the original stereo audio file. Use headphones to get a stereo image).

The meeting was held with Morgan sitting to the PDA's left (positive lag), Dan to its right (negative lag), and Adam more or less mid-axis (a lag around zero). The figure shows a clearly visible correlation between the actual speaker and the dominant peak in the cross-correlation. Moreover, a very simple thresholding scheme goes a long way towards discriminating the voices coming from the separate directions, although the result is noisy and not particularly precise.

Another thing to note is how much overlap there was between speakers even in this short excerpt. Admittedly this was from the beginning of the meeting, when the participants were still trying to figure out what was going on and so hadn't yet fallen into their most natural conversation style. But at the same time, I suspect it is not that unusual, and I don't think the participants felt that they were interrupting one another.
